Deferred Rendering: Geometry

- 2.19.1. Overview

- 2.19.2. Groups

- 2.19.3. Geometry Buffer

- 2.19.4. Algorithm

- 2.19.5. Ordering/Batching

- 2.19.6. Normal Compression

Overview

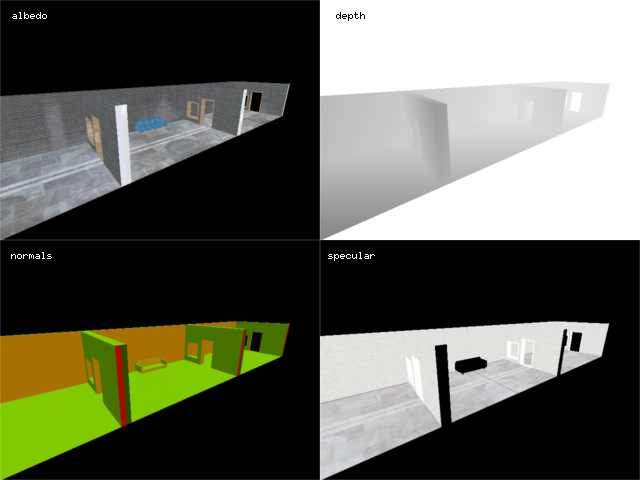

The first step in deferred rendering involves rendering all opaque instances in the current scene to a geometry buffer. This populated geometry buffer is then primarily used in later stages to calculate lighting, but can also be used to implement effects such as screen-space ambient occlusion and emission.

In the r2 package, the primary implementation of the deferred geometry rendering algorithm is the R2GeometryRenderer type.

Groups

Groups are a simple means to constrain the contributions of sets of specific light sources to sets of specific rendered instances. Instances and lights are assigned a group number in the range [1, 15]. If the programmer does not explicitly assign a number, the number 1 is assigned automatically. During rendering, the group number of each rendered instance is written to the stencil buffer. Then, when the light contribution is calculated for a light with group number n, only those pixels that have a corresponding value of n in the stencil buffer are allowed to be modified.
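The group mechanism can be illustrated with a small pure model. This is only a sketch: the `Group`, `Pixel`, and `applyLight` names are hypothetical and are not part of the r2 API, and the stencil buffer is modeled as a plain field on each pixel rather than as GPU state.

```haskell
-- Hypothetical model of light groups; none of these names come from r2.
newtype Group = Group Int
  deriving (Eq, Show)

-- | Group numbers are restricted to the range [1, 15].
mkGroup :: Int -> Maybe Group
mkGroup n
  | n >= 1 && n <= 15 = Just (Group n)
  | otherwise         = Nothing

-- | The group assigned when the programmer does not choose one.
defaultGroup :: Group
defaultGroup = Group 1

-- | A pixel holds the group written to the stencil buffer during
-- geometry rendering, plus an accumulated light intensity.
data Pixel = Pixel
  { pixelStencil :: Group
  , pixelLight   :: Float
  } deriving (Eq, Show)

-- | Add a light's contribution only to those pixels whose stencil
-- value matches the light's group; all other pixels are untouched.
applyLight :: Group -> Float -> [Pixel] -> [Pixel]
applyLight g intensity = map step
  where
    step p
      | pixelStencil p == g = p { pixelLight = pixelLight p + intensity }
      | otherwise           = p
```

Applying a light in group 2 to a list containing one pixel from group 1 and one from group 2 changes only the second pixel.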

Geometry Buffer

A geometry buffer is a render target in which the surface attributes of objects are stored prior to being combined with the contents of a light buffer to produce a lit image.

One of the main implementation issues in any deferred renderer is deciding which surface attributes (such as position, albedo, normals, etc) to store and which to reconstruct. The more attributes that are stored, the less work is required during rendering to reconstruct those values. However, storing more attributes requires a larger geometry buffer and more memory bandwidth to actually populate that geometry buffer during rendering. The r2 package leans towards having a more compact geometry buffer and doing slightly more reconstruction work during rendering.

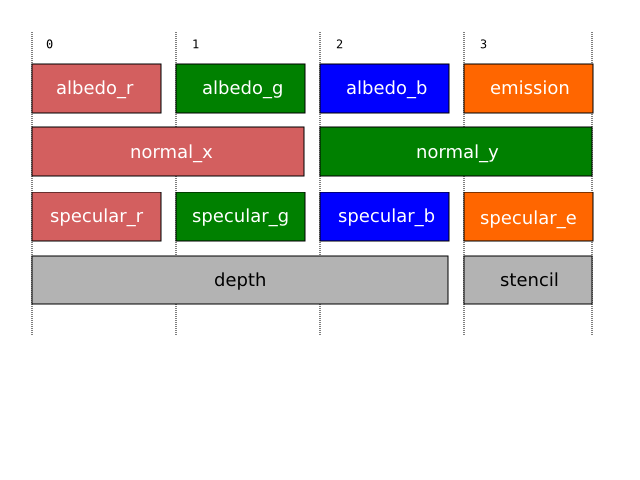

The r2 package explicitly stores the albedo, normals, emission level, and specular color of surfaces. Additionally, the depth buffer is sampled to recover the depth of surfaces. The eye-space positions of surfaces are recovered via an efficient position reconstruction algorithm which uses the current viewing projection and logarithmic depth value as input. In order to reduce the amount of storage required, three-dimensional eye-space normal vectors are stored compressed as two 16-bit half-precision floating point components via a simple mapping. This means that only 32 bits are required to store the vectors, and very little precision is lost. The precise format of the geometry buffer is as follows:

The albedo_r, albedo_g, and albedo_b components correspond to the red, green, and blue components of the surface albedo, respectively. The emission component refers to the surface emission level. The normal_x and normal_y components correspond to the two components of the compressed surface normal vector. The specular_r, specular_g, and specular_b components correspond to the red, green, and blue components of the surface specularity. Surfaces that will not receive specular highlights simply have 0 for each component. The specular_e component holds the surface specular exponent divided by 256.
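The specular encoding described above can be sketched as follows. The function names are hypothetical, the result is a plain tuple rather than an actual render target layout, and writing 0 for specular_e on non-specular surfaces is an assumption made here for illustration (the text only states that the color components are 0).

```haskell
-- Hypothetical helpers; these are not r2 functions.

-- | Encode a specular exponent for storage in specular_e.
encodeSpecularExponent :: Float -> Float
encodeSpecularExponent e = e / 256.0

-- | Recover the specular exponent from a stored specular_e value.
decodeSpecularExponent :: Float -> Float
decodeSpecularExponent s = s * 256.0

-- | Produce (specular_r, specular_g, specular_b, specular_e).
-- 'Nothing' denotes a surface without specular highlights, which
-- is written as pure black. The zero exponent in that case is an
-- assumption of this sketch.
specularFields :: Maybe ((Float, Float, Float), Float)
               -> (Float, Float, Float, Float)
specularFields Nothing               = (0.0, 0.0, 0.0, 0.0)
specularFields (Just ((r, g, b), e)) = (r, g, b, encodeSpecularExponent e)
```

Under this encoding, an exponent of 64 is stored as 0.25 and decodes back to 64.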

In the r2 package, geometry buffers are instances of R2GeometryBufferType.

Algorithm

An informal description of the geometry rendering algorithm as implemented in the r2 package is as follows:

- Set the current render target to a geometry buffer b.

- Enable writing to the depth and stencil buffers, and enable stencil testing. Enable depth testing such that only pixels with a depth less than or equal to the current depth are touched.

- For each group g:

  - Configure stencil testing such that only pixels with the allow bit enabled are touched, and configure stencil writing such that the index of g is recorded in the stencil buffer.

  - For each instance o in g:

    - Render the surface albedo, eye space normals, specular color, and emission level of o into b. Normal mapping is performed during rendering, and if o does not have specular highlights, then a pure black (zero intensity) specular color is written. Effects such as environment mapping are considered to be part of the surface albedo and so are performed in this step.
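The loop structure of the steps above can be modeled on the CPU as a pure function. This is a rough sketch under several assumptions: instances are represented as lists of pre-rasterized fragments, only the albedo is stored, the stencil allow bit is omitted, and all type and function names are hypothetical rather than taken from r2.

```haskell
import qualified Data.Map.Strict as Map
import Data.List (foldl')

-- | A fragment produced by rasterizing an instance (hypothetical type).
data Fragment = Fragment
  { fragCoord  :: (Int, Int)
  , fragDepth  :: Float
  , fragAlbedo :: (Float, Float, Float)
  }

-- | One stored pixel: depth, the group index written to the
-- stencil buffer, and the surface albedo.
data Texel = Texel
  { texelDepth  :: Float
  , texelGroup  :: Int
  , texelAlbedo :: (Float, Float, Float)
  } deriving (Eq, Show)

type GBuffer = Map.Map (Int, Int) Texel

-- | Write a fragment if it passes the less-than-or-equal depth test,
-- recording the group index alongside the surface attributes.
writeFragment :: Int -> GBuffer -> Fragment -> GBuffer
writeFragment g b f =
  case Map.lookup (fragCoord f) b of
    Just t | fragDepth f > texelDepth t -> b -- fails the depth test
    _ -> Map.insert (fragCoord f) (Texel (fragDepth f) g (fragAlbedo f)) b

-- | For each group, for each instance in the group, render the
-- instance's fragments into the buffer.
renderGeometry :: [(Int, [[Fragment]])] -> GBuffer
renderGeometry = foldl' renderGroup Map.empty
  where
    renderGroup b (g, instances) = foldl' (renderInstance g) b instances
    renderInstance g = foldl' (writeFragment g)
```

In this model, two instances that cover the same pixel at different depths produce the same buffer regardless of the order in which their groups are submitted, because the nearer fragment always wins the depth test.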

Ordering/Batching

Due to the use of depth testing, the geometry rendering algorithm is effectively order independent: instances can be rendered in any order and the final image will always be the same. However, there are efficiency advantages in rendering instances in a particular order. The most efficient order of rendering is the one that minimizes internal OpenGL state changes. NVIDIA's Beyond Porting presentation gives the relative cost of OpenGL state changes, from most expensive to least expensive, expressed as the approximate number of changes that can be performed per second [18]:

- Render target changes: 60,000/second

- Program bindings: 300,000/second

- Texture bindings: 1,500,000/second

- Vertex format (exact cost unspecified)

- UBO bindings (exact cost unspecified)

- Vertex bindings (exact cost unspecified)

- Uniform updates: 10,000,000/second

Therefore, it is beneficial to order rendering operations such that the most expensive state changes happen the least frequently.

The R2SceneOpaquesType type provides a simple interface that allows the programmer to specify instances without worrying about ordering concerns. When all instances have been submitted, they will be delivered to a given consumer (typically a geometry renderer) via the opaquesExecute method in the order that would be most efficient for rendering. Typically, this means that instances are first batched by shader, because switching programs is the second most expensive type of render state change. The shader-batched instances are then batched by material, in order to reduce the number of uniform updates that need to occur per shader.
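The batching order described above amounts to sorting by shader first and by material second. The following is a minimal sketch of that idea, assuming shaders and materials can be identified by integers. The `Instance` record and both functions are hypothetical and do not reflect the actual R2SceneOpaquesType interface.

```haskell
import Data.List (sortBy)
import Data.Ord (comparing)

-- | A hypothetical instance description; shaders and materials
-- are identified by plain integers for the sake of the example.
data Instance = Instance
  { instanceName     :: String
  , instanceShader   :: Int
  , instanceMaterial :: Int
  } deriving (Eq, Show)

-- | Order instances by shader, then by material within each shader,
-- so that the more expensive program bindings change least often.
orderForRendering :: [Instance] -> [Instance]
orderForRendering =
  sortBy (comparing instanceShader <> comparing instanceMaterial)

-- | Count how often a piece of state changes between consecutive
-- instances when they are rendered in the given order.
stateChanges :: Eq k => (Instance -> k) -> [Instance] -> Int
stateChanges key xs =
  length (filter id (zipWith (\a b -> key a /= key b) xs (drop 1 xs)))
```

For four instances that alternate between two shaders, `stateChanges instanceShader` reports three program changes in submission order but only one after `orderForRendering`.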

Normal Compression

The r2 package uses a Lambert azimuthal equal-area projection to store surface normal vectors in two components instead of three. This makes use of the fact that normalized vectors represent points on the unit sphere. The mapping from normal vectors to two-dimensional spheremap coordinates is given by compress (NormalCompress.hs):

```haskell
module NormalCompress where

import qualified Vector3f
import qualified Vector2f
import qualified Normal

-- | Map a unit normal vector to two-dimensional spheremap coordinates.
compress :: Normal.T -> Vector2f.T
compress n =
  let p = sqrt ((Vector3f.z n * 8.0) + 8.0)
      -- Scale x and y by p, then shift into the range [0, 1].
      x = (Vector3f.x n / p) + 0.5
      y = (Vector3f.y n / p) + 0.5
  in Vector2f.V2 x y
```

The mapping from two-dimensional spheremap coordinates to normal vectors is given by decompress (NormalDecompress.hs):

```haskell
module NormalDecompress where

import qualified Vector3f
import qualified Vector2f
import qualified Normal

-- | Map two-dimensional spheremap coordinates back to a normal vector.
decompress :: Vector2f.T -> Normal.T
decompress v =
  let -- Undo the shift and scale applied by compress: [0, 1] -> [-2, 2].
      fn = Vector2f.V2 ((Vector2f.x v * 4.0) - 2.0) ((Vector2f.y v * 4.0) - 2.0)
      f = Vector2f.dot2 fn fn
      g = sqrt (1.0 - (f / 4.0))
      x = (Vector2f.x fn) * g
      y = (Vector2f.y fn) * g
      z = 1.0 - (f / 2.0)
  in Vector3f.V3 x y z
```

[18] The presentation does not specify a publication date. However, inspection of the presentation's metadata suggests that it was written in October 2014, so the numbers given are likely for reasonably high-end 2014-era hardware.
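As a check on the compress and decompress mappings from the Normal Compression section, the following self-contained sketch restates both functions over plain tuples of `Double` and measures the round-trip error. The tuple types and the `normalize` and `roundTripError` helpers are assumptions made for this example; the original listings use the `Vector3f`, `Vector2f`, and `Normal` modules instead. Note that the formula divides by zero for a normal with z = -1, so that input is not handled here.

```haskell
type V3 = (Double, Double, Double)
type V2 = (Double, Double)

-- | Same arithmetic as compress, restated over tuples.
compress :: V3 -> V2
compress (nx, ny, nz) =
  let p = sqrt (nz * 8.0 + 8.0)
  in (nx / p + 0.5, ny / p + 0.5)

-- | Same arithmetic as decompress, restated over tuples.
decompress :: V2 -> V3
decompress (vx, vy) =
  let fx = vx * 4.0 - 2.0
      fy = vy * 4.0 - 2.0
      f  = fx * fx + fy * fy
      g  = sqrt (1.0 - f / 4.0)
  in (fx * g, fy * g, 1.0 - f / 2.0)

-- | Scale a non-zero vector to unit length.
normalize :: V3 -> V3
normalize (x, y, z) =
  let m = sqrt (x * x + y * y + z * z)
  in (x / m, y / m, z / m)

-- | Largest per-component difference between a unit normal and
-- the result of compressing and then decompressing it.
roundTripError :: V3 -> Double
roundTripError n =
  let (x, y, z)    = n
      (x', y', z') = decompress (compress n)
  in maximum [abs (x - x'), abs (y - y'), abs (z - z')]
```

For example, the normal (0, 0, 1) compresses to (0.5, 0.5) and decompresses back to (0, 0, 1).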